*Client anonymised for privacy purposes.

Background:

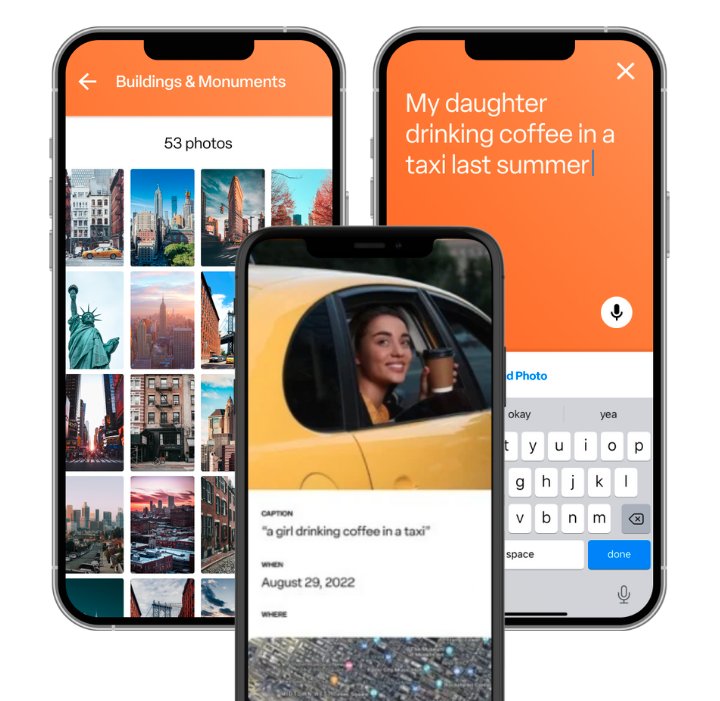

Our client set out to solve a universal problem: finding and organising digital photos, a challenge faced by people of all ages and across all kinds of devices. To address it, they developed an AI-powered image search and storage app, available on Android and iOS.

Before:

- Basic photo search that relied on manual scrolling or simple keywords, with limited accuracy.

- Generic object detection that missed important image features.

- Limited sorting and categorisation of photos into folders.

After:

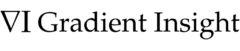

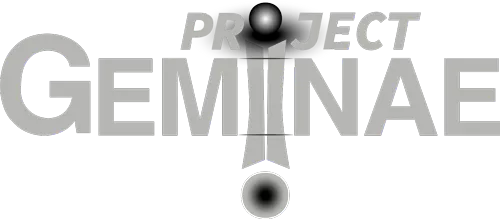

- The system identifies all elements within an image, including people, locations, colours and activities.

- Advanced, context-aware search: users search for photos in natural language, and the system matches each query against the elements detected in images.

- Returns the most relevant images with minimal latency.

- Automatic creation of albums.

- Improved user satisfaction through a personalised, intuitive experience.

- The app received overwhelmingly positive reviews and more than 50k downloads.
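The case study does not say how albums are created automatically. One simple approach, assuming each photo is stored alongside the labels its detector produced, is to group photos that share a detected label. The sketch below is a hypothetical illustration of that idea, not the client's method: the `build_albums` function, the label-set data model and the `min_size` threshold are all assumptions.

```python
from collections import defaultdict


def build_albums(photo_labels: dict[str, set[str]], min_size: int = 2) -> dict[str, list[str]]:
    """Group photos into albums keyed by a shared detected label.

    Hypothetical heuristic: `photo_labels` maps a photo ID to the set of
    labels (people, locations, colours, activities) detected in that photo.
    """
    albums: dict[str, list[str]] = defaultdict(list)
    for photo_id, labels in photo_labels.items():
        for label in labels:
            albums[label].append(photo_id)
    # Keep only labels shared by at least `min_size` photos; sort IDs for stable output.
    return {label: sorted(ids) for label, ids in albums.items() if len(ids) >= min_size}
```

For example, photos tagged `{"beach", "dog"}`, `{"beach"}` and `{"dog", "park"}` would yield a "beach" album and a "dog" album, while "park" (seen only once) would not get its own album. A production system would more likely cluster on embeddings, time and location rather than on exact label matches.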

Process:

- Technology Assessment: Conducted an exhaustive analysis of cutting-edge AI models to select the most appropriate technologies for the image search app, considering factors such as accuracy, efficiency, and scalability.

- Custom Computer Vision Solution Development: Developed a bespoke computer vision solution tailored to the client's needs, featuring:

  - Object Detection: Trained a sophisticated model to accurately identify objects, people and other elements within images, ensuring comprehensive image understanding.

  - Image Captioning: Implemented an image captioning mechanism that generates descriptive sentences from individual detections, making photos easier to organise and retrieve.

- NLP Integration for Enhanced Search: Used contrastive learning to match user queries with image captions, enabling natural-language search that significantly improved both user experience and search accuracy.
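The captioning and query-matching steps above can be sketched roughly as follows. The client's models are not published, so this is a toy, standard-library-only illustration under stated assumptions: the `Detection` record and its category names are hypothetical, captions come from a fixed template rather than a learned captioner, and embeddings are plain vectors standing in for the outputs of trained text encoders. The contrastive objective shown is InfoNCE, the loss used by CLIP-style models; the case study only says "contrastive learning", so the specific loss and the temperature value are assumptions.

```python
import math
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical detector output; categories assumed: person, object, activity, location, colour."""
    label: str
    category: str


def caption_from_detections(detections: list[Detection]) -> str:
    """Build a simple template caption from individual detections (illustrative only)."""
    by_cat: dict[str, list[str]] = {}
    for d in detections:
        by_cat.setdefault(d.category, []).append(d.label)
    parts = []
    subjects = by_cat.get("person", []) + by_cat.get("object", [])
    if subjects:
        parts.append(" and ".join(subjects))
    if "activity" in by_cat:
        parts.append(" ".join(by_cat["activity"]))
    if "location" in by_cat:
        parts.append("at " + " ".join(by_cat["location"]))
    if "colour" in by_cat:
        parts.append("with " + " and ".join(by_cat["colour"]) + " tones")
    return " ".join(parts)


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def info_nce_loss(query_embs: list[list[float]], caption_embs: list[list[float]],
                  temperature: float = 0.07) -> float:
    """Mean InfoNCE loss over a batch: query i is the positive pair of caption i,
    and every other caption in the batch acts as a negative."""
    total = 0.0
    for i, q in enumerate(query_embs):
        logits = [cosine(q, c) / temperature for c in caption_embs]
        m = max(logits)  # subtract the max for a numerically stable log-sum-exp
        log_sum_exp = m + math.log(sum(math.exp(l - m) for l in logits))
        total += log_sum_exp - logits[i]  # equals -log softmax(logits)[i]
    return total / len(query_embs)


def rank_images(query_emb: list[float], caption_embs_by_image: dict[str, list[float]]) -> list[str]:
    """Return image IDs ordered from most to least similar caption embedding."""
    return sorted(caption_embs_by_image,
                  key=lambda img: cosine(query_emb, caption_embs_by_image[img]),
                  reverse=True)
```

During training, minimising `info_nce_loss` pulls each query embedding towards its paired caption and away from the other captions in the batch. At search time only `rank_images` is needed: caption embeddings can be computed once when a photo is added, so each query costs one encoder pass plus a similarity scan (or an approximate nearest-neighbour lookup at scale), which is one plausible way to keep latency low.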