In the LLM era, detecting an object in an image isn't impressive. Any capable model does it. But doing that same thing in real time, across over a thousand cameras simultaneously, running 24/7 in live US high schools and hospitals: that's a different problem entirely.

This is the story of how we built that system for Angel Protection: the three decisions that shaped the architecture, and why the obvious answer was wrong every time.

Prefer to watch? The full video walkthrough is above. The post goes deeper on implementation detail.

First: why not just use an LLM?

LLMs handle images now. The question is legitimate. Here's the answer in one number.

Running 10 cameras at 2 frames per second costs $2,000 every two hours with a frontier LLM. That same $2,000 buys the hardware that runs a purpose-built detection model across those same 10 cameras for years. LLMs are general-purpose tools. This is not a general-purpose problem.
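The arithmetic behind that comparison is easy to reproduce. The sketch below uses only two inputs from the paragraph above: the $2,000-per-two-hours figure and the 10-camera, 2 fps load. The per-frame price it prints is implied by those two numbers. It is not a quoted API rate.

```python
# Reproduce the LLM cost figure quoted above.
# Inputs from the text: 10 cameras, 2 frames per second,
# $2,000 per two hours with a frontier LLM.

CAMERAS = 10
FPS = 2
COST_PER_TWO_HOURS = 2_000.0  # USD, from the text
SECONDS_PER_TWO_HOURS = 2 * 60 * 60

def frames_in(seconds: int, cameras: int = CAMERAS, fps: int = FPS) -> int:
    """Total frames sent to the model over a time window."""
    return cameras * fps * seconds

def implied_cost_per_frame() -> float:
    """Per-frame price implied by the $2,000 / two-hour figure."""
    return COST_PER_TWO_HOURS / frames_in(SECONDS_PER_TWO_HOURS)

def llm_cost_per_year() -> float:
    """Extrapolate the two-hour cost to continuous 24/7 operation."""
    windows_per_year = 365 * 24 / 2
    return COST_PER_TWO_HOURS * windows_per_year

if __name__ == "__main__":
    print(f"frames per two hours: {frames_in(SECONDS_PER_TWO_HOURS):,}")
    print(f"implied cost per frame: ${implied_cost_per_frame():.4f}")
    print(f"cost per year, 24/7: ${llm_cost_per_year():,.0f}")
```

Run continuously, that load comes to about $8.76 million a year with the LLM. The one-off hardware spend for the same 10 cameras is roughly $2,000.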

Decision 1: Edge over Cloud

Angel Protection's instinct was already correct. The numbers confirm why.

When Angel Protection came to us, they already had a system in mind: cameras at each location feed a local server, that server runs the model, and detections go up to the cloud to trigger 911 alerts. Their question was whether that design held up at scale, or whether cloud processing made more sense.

The cloud had a genuine case — elastic scaling, less on-site hardware, push model updates without touching physical machines. For a lot of systems, cloud is the right call. We worked through the numbers to find out if this was one of them.

What we weighed: bandwidth, latency, privacy, and cost at scale.

The number that settled it

One 1080p frame is 1.9 MB. A hundred cameras at 2 fps is 380 MB/s, continuously, from every location. Schools and hospitals don't have that kind of uplink, and where they do, you're competing with everything else on the network. On the edge, the only thing that ever leaves the building is a timestamp and a cropped image.
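The bandwidth figure is simple to check. The sketch below takes the 1.9 MB frame size and the 100-camera, 2 fps load from the text. It treats 1 MB as 10^6 bytes when converting to network units.

```python
# Uplink needed if every frame were streamed to the cloud.
# FRAME_MB, CAMERAS and FPS come from the text; 1 MB/s = 8 Mbit/s.

FRAME_MB = 1.9  # one 1080p frame, as stated in the text
CAMERAS = 100
FPS = 2

def uplink_mb_per_s(cameras: int = CAMERAS, fps: int = FPS,
                    frame_mb: float = FRAME_MB) -> float:
    """Sustained upload rate if every frame goes to the cloud."""
    return cameras * fps * frame_mb

def mb_per_s_to_gbit_per_s(mb_per_s: float) -> float:
    """Convert megabytes per second to gigabits per second."""
    return mb_per_s * 8 / 1000

rate = uplink_mb_per_s()
print(f"{rate:.0f} MB/s = {mb_per_s_to_gbit_per_s(rate):.2f} Gbit/s")
```

That works out to roughly 3 Gbit/s of sustained upload per site.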

"Latency and privacy just confirmed what the bandwidth number had already decided. Cloud inference adds a full network round-trip on top of model processing time — when you're detecting a weapon in a school corridor, that round-trip costs real seconds."

Decision 2: Distributed over Monolithic

Edge was settled. The first version of the architecture had a problem we didn't expect.

The first version was one machine per location — CPU and GPU in the same box, handling everything: decoding the RTSP streams, running motion detection as a pre-filter, then running the model on frames that had actual movement.

Motion detection was a smart first optimisation. Most of the time a corridor is empty, so most frames never reach the model. But running that check on every frame from every camera, every second, is constant CPU work, and decoding the compressed video streams sits on top of that.
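The post doesn't say which motion detector v1 used. A common choice is simple frame differencing, sketched below in NumPy. The `has_motion` helper and its thresholds are hypothetical and illustrative, not tuned values from this deployment. Even this cheap check touches every pixel of every frame. Repeated across many feeds, that per-pixel work is where the CPU time goes.

```python
import numpy as np

def has_motion(prev: np.ndarray, curr: np.ndarray,
               pixel_thresh: int = 25, area_frac: float = 0.002) -> bool:
    """Frame-differencing motion check on two grayscale uint8 frames.

    A pixel counts as changed if its intensity moved by more than
    pixel_thresh; the frame has motion if more than area_frac of all
    pixels changed. Both thresholds are illustrative assumptions.
    """
    # Widen to int16 so the subtraction can't wrap around in uint8.
    diff = np.abs(curr.astype(np.int16) - prev.astype(np.int16))
    changed = np.count_nonzero(diff > pixel_thresh)
    return bool(changed > area_frac * diff.size)

if __name__ == "__main__":
    empty = np.zeros((1080, 1920), dtype=np.uint8)  # empty corridor
    person = empty.copy()
    person[400:700, 900:1000] = 180                 # a 100 x 300 px blob
    print(has_motion(empty, empty.copy()))  # False
    print(has_motion(empty, person))        # True
```

Only frames that pass a check like this would be forwarded to the detection model.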

Version 1 resource utilisation: the CPU (stream decoding + motion detection) never got a break, while the GPU, the most expensive component, sat idle waiting for frames.

The CPU was always near 100%. The GPU — the part actually running the model — was sitting below 30%. The most expensive component in the whole setup was basically idle. We were paying GPU-machine prices and the GPU was barely working. At this scale, that makes the whole project much more expensive than it needs to be.

We were already running high-spec CPUs — scaling up wasn't the answer. So we changed the shape of the system entirely.

Architecture, before and after: v1 was monolithic, with one machine doing everything; v2 is distributed, with the work split across machines and only alerts leaving the site.

The result of splitting CPU and GPU work: 1/10th the hardware cost.

Not from a better model or smarter cloud setup — just from deciding that decode and inference shouldn't live on the same machine.
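The post gives the shape of v2 but not its code. The sketch below shows the core idea under stated assumptions. Decode nodes (assumed here to also run the motion check) push only frames with movement onto a shared queue. A GPU worker drains that queue in batches, so every forward pass has work in it. `drain_batch`, `gpu_worker`, the batch size and the timeout are all hypothetical. In a real multi-machine deployment the in-process `queue.Queue` would be replaced by a network message broker.

```python
import queue
import threading
import time
from typing import Any, Callable, List

def drain_batch(q: "queue.Queue[Any]", max_batch: int = 16,
                max_wait_s: float = 0.05) -> List[Any]:
    """Pull up to max_batch items, waiting at most max_wait_s in total."""
    batch: List[Any] = []
    deadline = time.monotonic() + max_wait_s
    while len(batch) < max_batch:
        remaining = deadline - time.monotonic()
        if remaining <= 0:
            break
        try:
            batch.append(q.get(timeout=remaining))
        except queue.Empty:
            break
    return batch

def gpu_worker(q: "queue.Queue[Any]",
               run_model: Callable[[List[Any]], None],
               stop: threading.Event) -> None:
    """Keep the GPU fed: batch whatever the decode nodes have queued."""
    while not (stop.is_set() and q.empty()):
        batch = drain_batch(q)
        if batch:
            run_model(batch)  # one batched forward pass per call

if __name__ == "__main__":
    frames: "queue.Queue[Any]" = queue.Queue()
    # Stand-ins for decode nodes: each puts (camera_id, frame_index)
    # for frames that passed the motion check.
    for cam in range(3):
        for i in range(10):
            frames.put((cam, i))
    stop = threading.Event()
    stop.set()  # no more producers in this demo: drain and exit
    gpu_worker(frames, lambda b: print(f"batch of {len(b)}"), stop)
```

Batching is what turns "the GPU is waiting for frames" into "the GPU runs at capacity". Many cheap producers keep one expensive consumer saturated.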

Decision 3: Fine-tuned over Pre-trained

A brilliant architecture means nothing if the model gets it wrong. This is the part most people underestimate.

The model receives an image and tells you whether any target objects are present. The assumption many projects make: grab something pre-trained on a standard dataset — COCO, Open Images — point it at the feed, and you're done.

We found two specific gaps that ruled that out for this deployment.

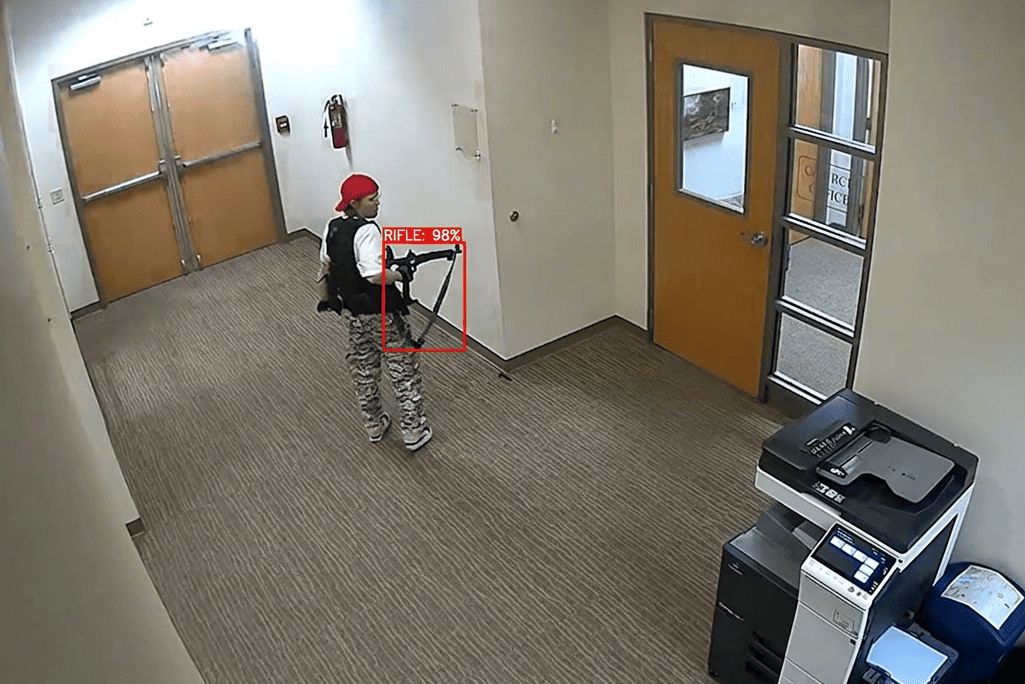

GAP 1 — PERSPECTIVE

CCTV cameras look down. Datasets don't.

Models trained on press photos have never seen a weapon from above at corridor distance. The perspective gap is total.

GAP 2 — OBJECT SIZE

At camera distance, a weapon is ~30 pixels wide.

Detecting something 30 pixels wide under real low-light conditions is a task standard models were never optimised for.
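A rough way to see why 30 pixels is hard: YOLO-family detectors resize each frame to a fixed input size. They then predict on feature grids downsampled by strides of 8, 16 and 32. The sketch below makes two assumptions that are not figures from this deployment. It assumes the ~30 px is measured in a 1920-pixel-wide 1080p frame. It also assumes a 640-pixel model input, a common YOLO default.

```python
# How big is a ~30 px object once the frame is resized for the model,
# and how many feature-map cells does it span at each detection stride?
# Assumptions: 1920 px wide source frame, 640 px model input, and the
# stride-8/16/32 detection heads common to YOLO-family models.

SOURCE_WIDTH = 1920
MODEL_INPUT = 640
STRIDES = (8, 16, 32)

def resized_size(obj_px: float, src: int = SOURCE_WIDTH,
                 dst: int = MODEL_INPUT) -> float:
    """Object width after scaling the frame down to the model input."""
    return obj_px * dst / src

def cells_spanned(obj_px: float) -> dict:
    """Feature-map cells the object covers at each stride."""
    size = resized_size(obj_px)
    return {s: size / s for s in STRIDES}

print(resized_size(30))   # 10.0
print(cells_spanned(30))  # {8: 1.25, 16: 0.625, 32: 0.3125}
```

After resizing, the object is about 10 px wide. That is barely more than one cell on the finest grid and about a third of a cell on the coarsest.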

So we fine-tuned. Fine-tuning means you take a model already trained on a large dataset — which gives it a general understanding of the visual world — and then continue training it on your specific domain data. The base model already knows what a weapon looks like in general. You're teaching it what one looks like specifically in your deployment conditions.
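The post doesn't give the training recipe. The PyTorch sketch below shows one common variant, assuming a model split into a pretrained `backbone` and a task `head`. It freezes the backbone so its general visual features are kept, and trains only the head on new domain data. The tiny model uses a classification head as a stand-in for a detection head, and the random tensors stand in for CCTV-angle images. Neither is AngelCV's architecture or API. Full fine-tuning would simply leave the backbone trainable, usually with a lower learning rate.

```python
import torch
from torch import nn

torch.manual_seed(0)

class TinyModel(nn.Module):
    """Stand-in model: 'backbone' plays the pretrained feature extractor."""
    def __init__(self, num_classes: int = 2) -> None:
        super().__init__()
        self.backbone = nn.Sequential(
            nn.Conv2d(3, 8, kernel_size=3, padding=1), nn.ReLU(),
            nn.AdaptiveAvgPool2d(1), nn.Flatten(),
        )
        self.head = nn.Linear(8, num_classes)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.head(self.backbone(x))

model = TinyModel()
# In practice, pretrained weights would be loaded into the backbone here
# (omitted: any checkpoint path would be hypothetical).

# Freeze the backbone: keep its general features, adapt only the head.
for p in model.backbone.parameters():
    p.requires_grad = False

optimizer = torch.optim.SGD(
    (p for p in model.parameters() if p.requires_grad), lr=0.01)
loss_fn = nn.CrossEntropyLoss()

# Random tensors standing in for domain-specific images and labels.
images = torch.randn(4, 3, 32, 32)
labels = torch.tensor([0, 1, 0, 1])

backbone_before = [p.clone() for p in model.backbone.parameters()]
head_before = model.head.weight.clone()

# One training step on the new domain data.
model.train()
optimizer.zero_grad()
loss = loss_fn(model(images), labels)
loss.backward()
optimizer.step()
```

After the step, the backbone weights are unchanged and only the head has moved toward the new data.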

We built our own dataset: CCTV-angle images, sourced from other datasets, search engines, social media, and news footage. Around 1,500 images per class is a good starting point — but quality matters more than quantity.
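A quick way to check a dataset against that ~1,500-per-class starting point is sketched below. It assumes labels in the widely used YOLO text format: one file per image, with one `class x_center y_center width height` line per box. The `images_per_class` and `short_classes` helpers and the sample label strings are illustrative. The count is images containing each class, not boxes.

```python
from collections import Counter
from typing import Dict, Mapping

TARGET_PER_CLASS = 1500  # starting point suggested in the text

def images_per_class(labels: Mapping[str, str]) -> Counter:
    """Count how many images contain each class id.

    `labels` maps an image name to the contents of its YOLO-format label
    file. An image with several boxes of the same class counts once.
    """
    counts: Counter = Counter()
    for text in labels.values():
        classes = {int(line.split()[0])
                   for line in text.splitlines() if line.strip()}
        counts.update(classes)
    return counts

def short_classes(counts: Counter, num_classes: int,
                  target: int = TARGET_PER_CLASS) -> Dict[int, int]:
    """Classes still below the target, with how many images they lack."""
    return {c: target - counts[c] for c in range(num_classes)
            if counts[c] < target}

if __name__ == "__main__":
    sample = {
        "cam01_0001.jpg": "0 0.51 0.40 0.02 0.03\n0 0.70 0.62 0.02 0.03\n",
        "cam02_0042.jpg": "1 0.33 0.58 0.05 0.01\n",
        "cam03_0007.jpg": "",  # background image, no objects
    }
    print(short_classes(images_per_class(sample), num_classes=2))
    # {0: 1499, 1: 1499}
```
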

Annotation quality is the overlooked multiplier

A box that hugs the object teaches the model the exact shape; a box that includes background teaches it noise.

Tight, accurate annotations on 1,500 images will outperform sloppy annotations on 5,000. The model learns exactly what you draw. This is where most DIY fine-tuning efforts fail.
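One way to put a number on "box includes background" is to measure what fraction of a loose box is actually the object. The sketch below uses made-up pixel coordinates: a tight 30 × 12 box inside a loose 50 × 30 one. Because the tight box sits entirely inside the loose box, their intersection over union (IoU) equals that object fraction.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels

def area(b: Box) -> float:
    """Area of an axis-aligned box (zero if degenerate)."""
    return max(0.0, b[2] - b[0]) * max(0.0, b[3] - b[1])

def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter: Box = (max(a[0], b[0]), max(a[1], b[1]),
                  min(a[2], b[2]), min(a[3], b[3]))
    i = area(inter)
    union = area(a) + area(b) - i
    return i / union if union > 0 else 0.0

# Hypothetical boxes around a ~30 px object.
tight: Box = (100, 200, 130, 212)  # 30 x 12: hugs the object
loose: Box = (90, 191, 140, 221)   # 50 x 30: object plus background

print(f"IoU tight vs loose: {iou(tight, loose):.2f}")  # 0.24
```

Here 76% of the loose box is background that the model is being told belongs to the object.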

"We went deep enough into this that we ended up implementing YOLOv10 from scratch in PyTorch — full control over the architecture, no licensing constraints, optimised for the exact conditions this system runs in."

Results

The system is live in production.

"The architecture developed delivered outstanding threat detection accuracy while significantly reducing costs. Any company looking to push the boundaries of what's possible with AI would be fortunate to work with them."

Open Source: AngelCV

A side effect of going deep enough to build from scratch.

We implemented YOLOv10 in PyTorch from scratch — full architecture, no third-party weight dependencies, no AGPL licensing constraints. The client let us open-source it. It's called AngelCV.


Simple interface, sensible defaults. Works out of the box. Apache 2.0 — use it commercially, free.

The open source was a side effect. The actual goal was a model that works at 2am in a school corridor, picking up something the size of a postage stamp in a grainy top-down feed. And it does.

The three decisions — summed up

Edge over Cloud

Bandwidth alone ruled cloud out. Latency and privacy confirmed it. Only alerts leave the building.

Distributed over Monolithic

CPU was the bottleneck. Split decode and inference across cheap commodity nodes → GPU runs at capacity → 1/10th the hardware cost.

Fine-tuned over Pre-trained

Public datasets don't have top-down CCTV perspective or sub-50px weapon images. Domain-specific data + tight annotations changed accuracy entirely.

None of those were the default answer. They only make sense when you look closely at the actual constraints of the deployment. That's what bespoke design gives you — not just better numbers, but a system that couldn't have been built any other way.

Gradient Insight

Cameras deployed and not doing enough?

Security, manufacturing, logistics — if cameras are already running and not extracting value from what they see, this is the kind of project we take on. Free discovery call, no commitment.