A line producing 2,000 units per shift with a 3% error rate ships 60 defective units every eight hours. Multiply that by your cost per returned item, warranty claim, or rework order, and the number gets uncomfortable fast.

Computer vision doesn't get tired. It doesn't have Mondays. It checks the same 24 parameters at the 2,000th unit exactly as it checked them at the first — in under 200 milliseconds.

This guide is for technical founders and operations leads at SMEs who want to understand exactly how these systems work, what the implementation journey looks like, and how to calculate whether the investment makes sense before spending a pound.

What Computer Vision Quality Control Actually Checks

A well-designed CV system can validate six categories of conditions simultaneously.

Presence / Absence

Complexity: Low

Is the component there? Is the label applied? Is the cap seated? Binary checks, but the most common source of costly misses.

Count Verification

Complexity: Low

Are there exactly 12 blister pockets? Six bolts in the kit? Three documents in the envelope? Hard for fatigued humans, trivial for a trained model.

Alignment & Orientation

Complexity: Medium

Is the barcode scannable? Is the connector seated correctly? Alignment failures cause expensive downstream automation failures.

Colour Matching

Complexity: Medium

Is this the correct label variant? Is the product the right formulation colour? Highly reliable once calibrated with proper lighting.

Dimensional Measurement

Complexity: Medium-High

Is the gap within tolerance? Modern vision systems measure to sub-millimetre accuracy at full production line speed.

Surface Defect Detection

Complexity: High

Scratches, dents, foreign objects, contamination. The most technically demanding category, and the highest value, since defects that escape become warranty claims.

"Surface defects are the most technically demanding to detect — and the highest value to catch. A scratch that escapes the line becomes a warranty claim."

The Hardware Reality

The good news: you almost certainly don't need a £200,000 machine vision installation. The revolution in industrial CV has been driven by three simultaneous trends:

- Modern architectures like YOLOv10 run real-time inference on edge hardware costing under £500

- Industrial cameras suitable for most applications cost £100-£1,500

- Lighting — often the most overlooked component — can be engineered correctly for £200-£800

Typical single-station hardware budget:

Training and integration are typically the larger cost — but they're also where the value is built.

Choose Edge when:

- Real-time pass/fail at the line

- GDPR obligations on product imagery

- Network reliability not guaranteed

- Latency under 100ms required

Consider Cloud when:

- Batch reporting is acceptable

- Synchronised updates across many stations

- Volume exceeds local compute capacity
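The two checklists above can be written down as a small decision helper. This is a minimal sketch, not a sizing tool: the class name, field names, and the tie-break rule (any edge criterion wins, on the assumption that those are hard constraints) are illustrative choices rather than anything prescribed above.

```python
from dataclasses import dataclass


@dataclass
class StationRequirements:
    """Answers to the checklist questions for one inspection station."""
    realtime_pass_fail: bool       # pass/fail decision needed at the line
    gdpr_on_imagery: bool          # GDPR obligations on product imagery
    network_reliable: bool         # can the station rely on its uplink?
    max_latency_ms: float          # slowest acceptable decision time
    batch_reporting_ok: bool       # results can arrive later, in batches
    multi_station_sync: bool       # many stations need synchronised updates
    exceeds_local_compute: bool    # volume is too high for local hardware


def recommend_deployment(req: StationRequirements) -> tuple[str, list[str]]:
    """Return a recommendation and the checklist items that drove it."""
    edge = []
    if req.realtime_pass_fail:
        edge.append("real-time pass/fail at the line")
    if req.gdpr_on_imagery:
        edge.append("GDPR obligations on product imagery")
    if not req.network_reliable:
        edge.append("network reliability not guaranteed")
    if req.max_latency_ms < 100:
        edge.append("latency under 100 ms required")

    cloud = []
    if req.batch_reporting_ok:
        cloud.append("batch reporting is acceptable")
    if req.multi_station_sync:
        cloud.append("synchronised updates across many stations")
    if req.exceeds_local_compute:
        cloud.append("volume exceeds local compute capacity")

    # Assumed tie-break: edge criteria are treated as hard constraints.
    if edge:
        return "edge", edge
    if cloud:
        return "cloud", cloud
    return "either", []


choice, reasons = recommend_deployment(
    StationRequirements(True, False, True, 50, False, False, False)
)
print(choice, reasons)
```

Returning the reasons alongside the choice keeps the decision auditable when requirements change later.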

The Four Phases of Implementation

What a real 6-week engagement actually looks like week-by-week.

Weeks 1-2

Problem Definition & Data Design

- Define acceptance criteria precisely in writing — what is a pass, fail, or marginal?

- Map all SKU variants (determines model complexity)

- Establish production rate (determines inference speed requirement)

- Survey camera mounting options and lighting constraints

- Capture baseline false positive / false negative rates
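One way to check whether the written acceptance criteria are precise enough is to have several inspectors label the same set of samples and measure how often they disagree. The sketch below assumes each inspector's labels arrive as a list in the same sample order; the function name and the example labels are hypothetical.

```python
def disagreement_rate(labels_by_inspector):
    """Fraction of samples on which the inspectors did not all agree."""
    if not labels_by_inspector:
        raise ValueError("need at least one inspector's labels")
    n = len(labels_by_inspector[0])
    if n == 0 or any(len(labels) != n for labels in labels_by_inspector):
        raise ValueError("every inspector must label the same non-empty sample set")

    # zip(*...) regroups the data so each tuple holds all votes for one sample.
    split_votes = sum(
        1 for votes in zip(*labels_by_inspector) if len(set(votes)) > 1
    )
    return split_votes / n


# Hypothetical audit: three inspectors, the same 20 samples.
inspector_a = ["pass"] * 16 + ["fail"] * 4
inspector_b = list(inspector_a)
inspector_b[3] = "fail"          # B rejects a unit A passed
inspector_b[17] = "marginal"     # B is unsure about a unit A failed
inspector_c = list(inspector_a)
inspector_c[10] = "marginal"     # C is unsure about a unit A passed

rate = disagreement_rate([inspector_a, inspector_b, inspector_c])
print(f"Inspectors disagree on {rate:.0%} of samples")
```

A high rate here suggests the criteria need tightening before any annotation starts, since the model can only be as consistent as its labels.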

Weeks 2-4

Data Collection & Annotation

- Collect 200-500 images per class per condition for initial training

- Capture defects deliberately — lines don't naturally produce enough examples

- Annotate with your domain expert, not just the data engineer

- Include lighting variation, orientation variation, and documented edge cases

Weeks 4-5

Model Training & Validation

- Split: 70% training / 15% validation / 15% held-out test

- Prioritise False Negative Rate — defects you miss cost more than false rejects

- Review confusion matrix with domain experts, not just data scientists

- With YOLOv10 on a modern GPU, training takes hours to days, not weeks
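The split and the false negative rate from the list above can be sketched in plain Python. Two assumptions to flag: the split is done class by class so that rare defect classes appear in all three sets (the list gives only the 70/15/15 ratios), and "positive" is taken to mean "defective". Function names and file names are hypothetical.

```python
import random
from collections import defaultdict


def stratified_split(samples, seed=0, train_pct=70, val_pct=15):
    """Split (image_path, label) pairs into train/val/test, class by class."""
    by_label = defaultdict(list)
    for sample in samples:
        by_label[sample[1]].append(sample)

    rng = random.Random(seed)            # fixed seed keeps the split reproducible
    train, val, test = [], [], []
    for label in sorted(by_label):
        items = by_label[label]
        rng.shuffle(items)
        n_train = len(items) * train_pct // 100
        n_val = len(items) * val_pct // 100
        train += items[:n_train]
        val += items[n_train:n_train + n_val]
        test += items[n_train + n_val:]  # the remainder is the held-out test set
    return train, val, test


def error_rates(tp, fn, fp, tn):
    """False negative and false positive rates from binary confusion counts.

    fn = defective units the model passed; fp = good units the model rejected.
    """
    fnr = fn / (tp + fn) if (tp + fn) else 0.0
    fpr = fp / (fp + tn) if (fp + tn) else 0.0
    return fnr, fpr


samples = [(f"ok_{i}.png", "ok") for i in range(20)]
samples += [(f"scratch_{i}.png", "scratch") for i in range(20)]
train, val, test = stratified_split(samples)
print(len(train), len(val), len(test))

fnr, fpr = error_rates(tp=45, fn=5, fp=10, tn=940)
print(f"FNR {fnr:.1%}, FPR {fpr:.1%}")
```

Reporting both rates side by side makes the trade-off explicit when the decision threshold is tuned to favour fewer missed defects.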

Weeks 5-6

Integration & Go-Live

- PLC signal integration (pass/fail output to line control)

- Build operator UI with visual overlays explaining each rejection

- Define edge case handling: what happens when the camera is obscured?

- Set up monitoring: how will you detect model performance drift?
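For the monitoring question, one simple proxy is to watch the rolling reject rate and raise a flag when it moves away from the rate seen at go-live. This is a sketch under that assumption only; the class name, window size, and tolerance are hypothetical, and a shift in reject rate can also mean the process itself changed, so an alert is a prompt to review rather than proof of drift.

```python
from collections import deque


class RejectRateMonitor:
    """Flags when the recent reject rate strays from a baseline."""

    def __init__(self, baseline_rate, window=500, tolerance=0.02):
        self.baseline_rate = baseline_rate
        self.tolerance = tolerance
        self.recent = deque(maxlen=window)   # oldest decisions fall off the front

    def record(self, rejected):
        """Log one pass/fail decision; return True if the rate looks off."""
        self.recent.append(bool(rejected))
        if len(self.recent) < self.recent.maxlen:
            return False                     # not enough history yet
        rate = sum(self.recent) / len(self.recent)
        return abs(rate - self.baseline_rate) > self.tolerance


monitor = RejectRateMonitor(baseline_rate=0.03, window=100, tolerance=0.02)
for rejected in [True] * 3 + [False] * 97:   # normal running, 3% rejects
    monitor.record(rejected)
alerts = [monitor.record(r) for r in [True] * 10 + [False] * 90]
print("alert raised:", any(alerts))
```

Pairing this with periodic re-checks of saved rejection images against human judgement gives a more direct measure than the reject rate alone.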

"Phase 1 is where most implementations succeed or fail. If three human inspectors disagree on 15% of the same samples, your training data will reflect that confusion."

ROI Calculation Framework

Before commissioning any work, run this calculation. It takes 15 minutes and tells you whether this is worth exploring.

Step 1

Quantify your current defect cost

Annual defect cost = (defects per day × working days) × average cost per escaped defect

Cost per defect varies widely: consumer goods rework £2-5; automotive warranty claims £500-5,000; pharma recalls are catastrophic.

Step 2

Quantify your inspection labour cost

Annual inspection labour = inspectors × hours per day × working days × hourly cost

Include management overhead and re-inspection time, not just direct labour.

Step 3

Model the AI reduction

Typical CV system achieves: 85-98% reduction in escaped defects + near-elimination of direct inspection labour + 15-40% throughput improvement

Step 4

Calculate payback period

Payback (months) = total implementation cost ÷ monthly savings

For most SME deployments in the £15,000-£60,000 range, payback is 6-18 months.
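The four steps can be run as a short script. This is a rough sketch: the input figures are made up for illustration, the 80% labour reduction is an assumed stand-in for "near-elimination", and any throughput gain is left out to keep the estimate conservative.

```python
def payback_estimate(
    defects_per_day,
    working_days,
    cost_per_escaped_defect,
    inspectors,
    hours_per_day,
    hourly_cost,
    implementation_cost,
    defect_reduction=0.85,    # low end of the 85-98% range
    labour_reduction=0.80,    # assumption, not a quoted figure
):
    """Work through Steps 1-4 and return the intermediate figures."""
    # Step 1: annual cost of escaped defects
    annual_defect_cost = defects_per_day * working_days * cost_per_escaped_defect
    # Step 2: annual inspection labour cost
    annual_labour_cost = inspectors * hours_per_day * working_days * hourly_cost
    # Step 3: savings after the modelled reduction
    annual_savings = (
        annual_defect_cost * defect_reduction
        + annual_labour_cost * labour_reduction
    )
    monthly_savings = annual_savings / 12
    if monthly_savings <= 0:
        raise ValueError("no savings modelled, so payback is undefined")
    # Step 4: payback period in months
    return {
        "annual_defect_cost": annual_defect_cost,
        "annual_labour_cost": annual_labour_cost,
        "monthly_savings": monthly_savings,
        "payback_months": implementation_cost / monthly_savings,
    }


# Hypothetical plant: 60 escaped defects a day at £3 each, two inspectors.
result = payback_estimate(
    defects_per_day=60, working_days=250, cost_per_escaped_defect=3.0,
    inspectors=2, hours_per_day=8, hourly_cost=15.0,
    implementation_cost=60_000,
)
print(f"Payback: {result['payback_months']:.1f} months")
```

Re-running it with pessimistic reduction figures is a quick way to see how sensitive the payback period is to the Step 3 assumptions.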

Six Questions to Ask Any Vision System Vendor

A vendor who can't answer these clearly hasn't thought through production deployment.

Can you show me a confusion matrix from a comparable production project?

What is your methodology for novel defect types the model wasn't trained on?

How does an operator override a system rejection?

What monitoring exists to detect model drift over time?

What happens when production adds a new SKU variant?

Who owns the trained model weights at the end of the engagement?

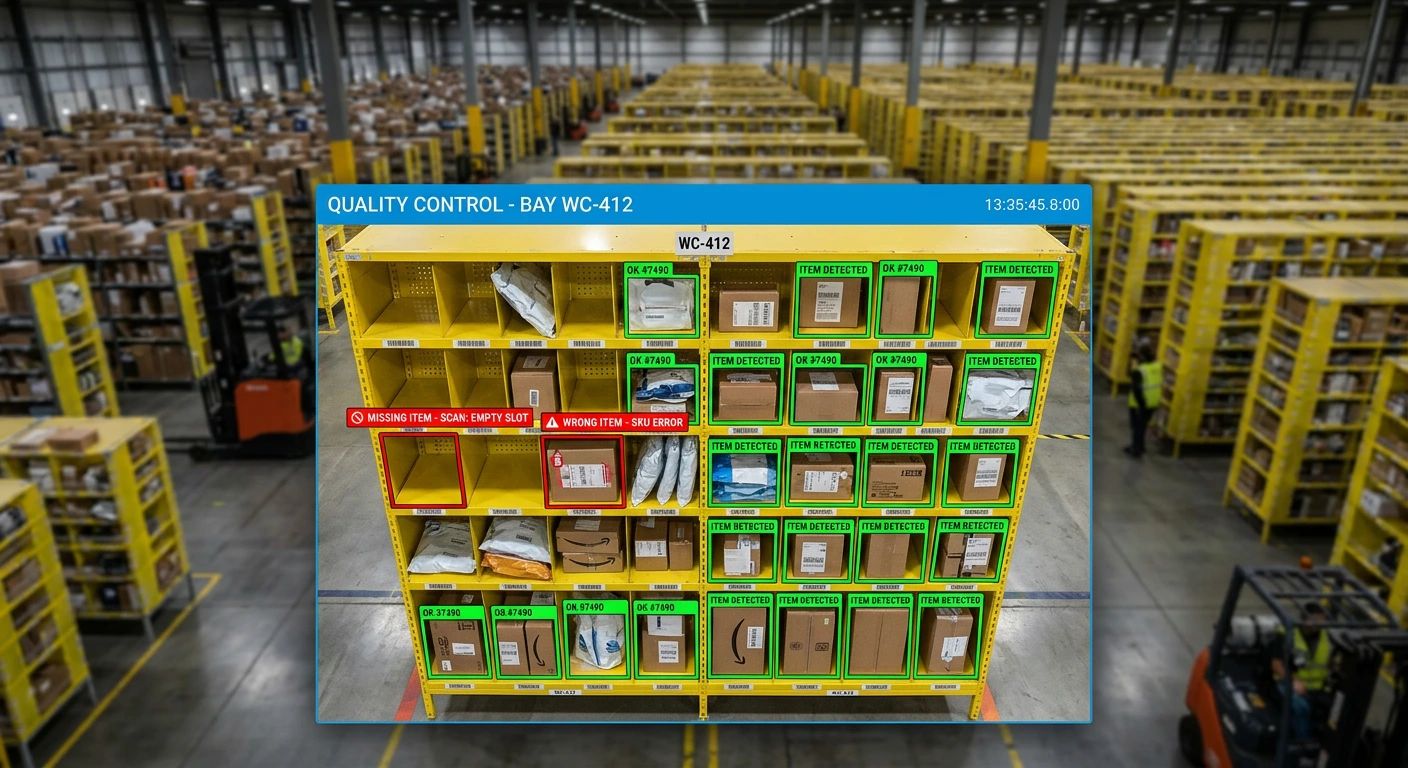

The Explainability Imperative

One pattern that kills quality control deployments: black-box outputs with no human oversight path. When the system rejects an item, operators need to understand why. Is it a genuine defect or a false alarm? Can the production team act on it?

Visual overlay

Show which detection triggered the rejection — not just 'FAIL'

Confidence score

Display the model's certainty alongside the decision

Rejection capture

Auto-save images of rejected items for model improvement

Override + reason code

Operators must be able to override, with a mandatory reason for your feedback loop

This isn't just good UX — it's how you continuously improve the model and maintain operator trust. A system that operators don't trust gets switched off. Explainability is how you protect your investment.
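The four elements above map naturally onto a single record per rejected item. The sketch below is one possible shape, not a prescribed schema: the class, field names, and reason code are hypothetical, and the only rule it enforces is that an override without a reason is refused.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RejectionRecord:
    unit_id: str
    detection: str                  # which check triggered the rejection
    confidence: float               # model certainty, 0.0 to 1.0
    image_path: str                 # saved image of the rejected item
    overridden: bool = False
    override_reason: Optional[str] = None

    def overlay_text(self):
        """Text for the operator screen: the cause, not just 'FAIL'."""
        return f"FAIL: {self.detection} ({self.confidence:.0%})"

    def override(self, reason_code):
        """Let an operator overrule the system, but only with a reason."""
        if not reason_code or not reason_code.strip():
            raise ValueError("an override needs a reason code")
        self.overridden = True
        self.override_reason = reason_code.strip()


record = RejectionRecord(
    unit_id="unit-0001",
    detection="scratch",
    confidence=0.91,
    image_path="rejects/unit-0001.png",
)
print(record.overlay_text())
record.override("FALSE_ALARM_GLARE")
```

Overridden records, with their saved images and reason codes, are the natural input for the next round of annotation and retraining.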

Gradient Insight

Ready to scope a CV quality control system?

We prototype in 6-8 weeks. Bring your production line specs and we'll tell you whether a CV system makes economic sense before you commit to a full build.