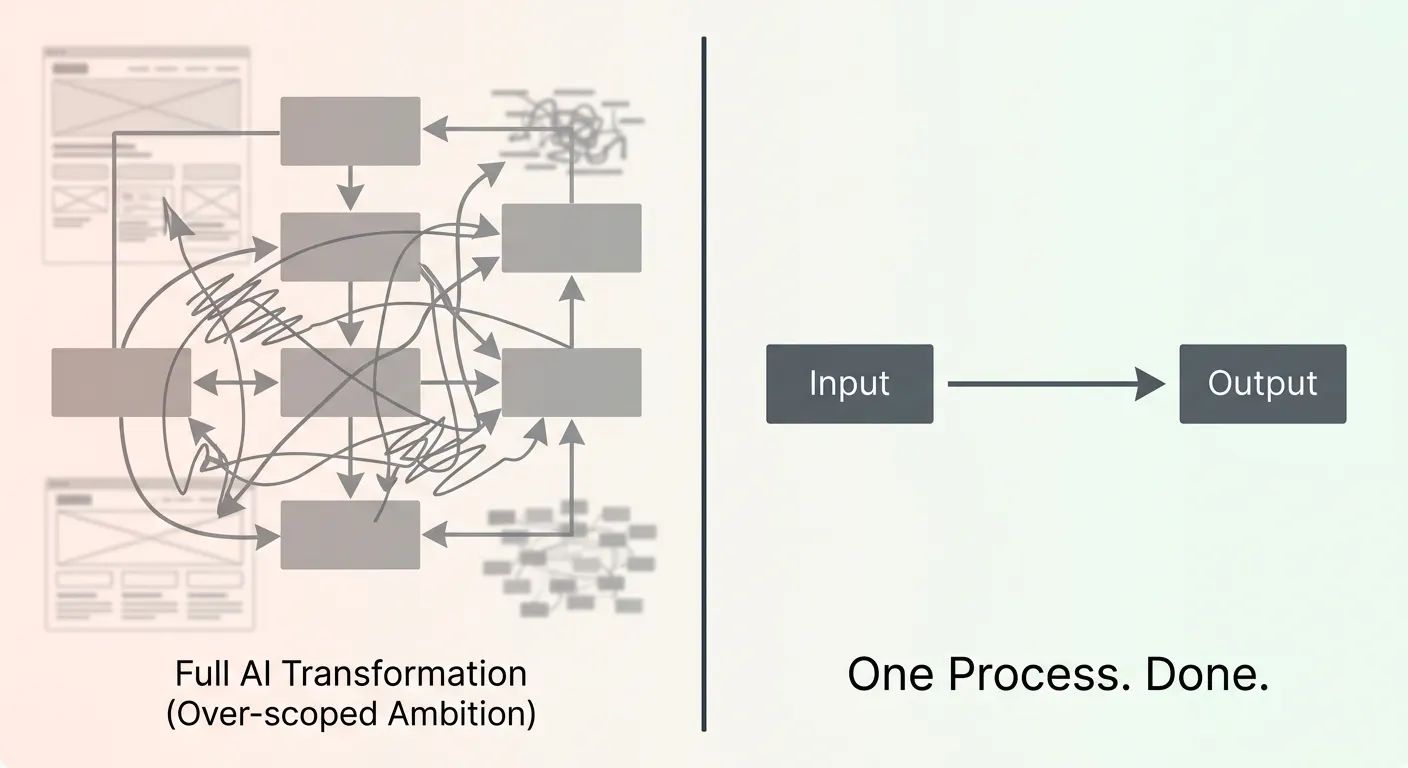

The pattern that kills most AI projects in SMEs isn't complexity. It's ambition without scope.

The brief arrives: "We want to automate our entire customer communications workflow." Weeks pass. A vendor produces a 40-page proposal. The price is £180,000. The timeline is 14 months. The board says no. Everyone concludes AI is too expensive.

The project that should have happened: identify the most painful, most repetitive, most well-defined subprocess. Build a working prototype in four weeks. Measure it against the baseline. If it works, extend it. If it doesn't, you've spent four weeks — not fourteen months.

The 80/8 Rule

Aim for 80% of the efficiency gain from 20% of the scope, delivered in 6–8 weeks. The remaining 20% of the gain takes 80% of the total time and budget. Start with the first 80%.

Why Most AI Projects Fail Before They Start

Wrong problem selection

What it sounds like:

"We want AI to improve customer satisfaction."

What it should sound like:

"We want to classify the 400 daily support emails we receive by urgency, routing them to the right team within 2 minutes."

The second has a defined input (400 daily support emails), a defined output (an urgency class and a destination team), a measurable target (routed within 2 minutes), and a clear test condition. The first is an aspiration.

Waiting for perfect data

What it sounds like:

"Our data isn't clean enough yet."

What it should sound like:

The data you have is never perfect. Treat the prototype phase as data collection infrastructure.

You build the pipeline, and the real data starts flowing. Perfect data comes after the prototype, not before.

Building for scale before proving value

What it sounds like:

"We need this to handle 1 million records."

What it should sound like:

You have 50,000 today. Prove it works on 50,000. Then scale.

Scale introduces cost and complexity. Only add them once the core system has proven its value on real production data.

Technology-first thinking

What it sounds like:

"We should use an LLM for this."

What it should sound like:

What's the simplest system that could possibly work? Start there.

Gradient-boosted trees often outperform LLMs on tabular data. Rule-based fallbacks are often more reliable than probabilistic models for well-defined routing. Choose boring tools for boring problems.
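To make the "simplest system" idea concrete, here is a minimal sketch of a rules-first email router, assuming the support-email example from earlier. The keyword patterns, team names, and the `route_email` function are hypothetical illustrations, not a recommended rule set. Anything the rules don't match falls through to human triage rather than being guessed at.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical keyword rules; a real rule set would come from your own tickets.
URGENT_PATTERNS = [r"\boutage\b", r"\bdown\b", r"\bcannot log ?in\b"]
BILLING_PATTERNS = [r"\binvoice\b", r"\bpayment\b", r"\brefund\b"]


@dataclass
class Route:
    team: str
    urgency: str
    matched_rule: Optional[str]  # None means no rule fired


def route_email(subject: str, body: str) -> Route:
    """Route an email using ordered keyword rules, with a human fallback."""
    text = f"{subject}\n{body}".lower()
    # Urgent rules are checked first so they win over billing rules.
    for pattern in URGENT_PATTERNS:
        if re.search(pattern, text):
            return Route("on_call", "high", pattern)
    for pattern in BILLING_PATTERNS:
        if re.search(pattern, text):
            return Route("billing", "normal", pattern)
    # Fallback: no rule matched, so a person decides.
    return Route("triage", "unknown", None)


print(route_email("Site is down", "We cannot reach the dashboard."))
print(route_email("Question", "Where can I find my latest invoice?"))
print(route_email("Hello", "Just saying thanks."))
```

Recording `matched_rule` keeps every routing decision explainable, which makes it easier to see where the rules fall short before reaching for a model.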

Finding Your First Win: The Boring Process Audit

The best first AI automation is usually the most boring process in your business. Run this three-step audit to find it.

Step 1 — List every manual task that happens more than 20 times per week

Examples: filing documents, copying data between systems, responding to template emails, checking inventory levels, reviewing supplier invoices, sorting inbound leads, generating status reports, manually tagging support tickets.

Step 2 — Score each task on three dimensions

- Frequency: How many times per week does this task occur?

- Variance: How different is each instance from the last?

- Defined output: Can you write 'done' in one sentence?

"High frequency × low variance × clear output = your first automation target. That formula rarely fails."
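One way to turn that formula into a ranking is sketched below. The 1-to-5 scales for variance and output clarity, the `Task` fields, and the example tasks are all assumptions made for illustration; the article only specifies the three dimensions and the 20-per-week threshold from Step 1.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    weekly_frequency: int  # times per week the task occurs
    variance: int          # assumed scale: 1 (identical each time) to 5 (always different)
    output_clarity: int    # assumed scale: 1 (vague) to 5 ("done" fits in one sentence)


def automation_score(task: Task) -> float:
    """High frequency and clear output raise the score; high variance lowers it."""
    return task.weekly_frequency * task.output_clarity / task.variance


def rank_tasks(tasks):
    """Keep tasks above the Step 1 threshold, best candidate first."""
    eligible = [t for t in tasks if t.weekly_frequency > 20]
    return sorted(eligible, key=automation_score, reverse=True)


# Illustrative numbers only.
tasks = [
    Task("review supplier invoices", weekly_frequency=60, variance=3, output_clarity=4),
    Task("tag support tickets", weekly_frequency=400, variance=2, output_clarity=5),
    Task("write board report", weekly_frequency=1, variance=4, output_clarity=3),
]

for task in rank_tasks(tasks):
    print(f"{task.name}: {automation_score(task):.0f}")
```

The absolute scores mean little on their own; the ordering is what matters, and it is only as good as the honesty of the variance and clarity ratings.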

Step 3 — Identify the measurement baseline

What's the current cost in time or money? You need a number to beat. Without a baseline, you can't prove success — and you can't make a capital allocation argument to your board. Time the current process. Count the errors. Get a number.
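A minimal sketch of the baseline calculation follows, using time per task × volume × hourly cost plus an optional allowance for errors. The function name, the parameters, and the example figures are assumptions for illustration; substitute your own timings and counts.

```python
def monthly_baseline_cost(
    minutes_per_task: float,
    tasks_per_week: float,
    hourly_cost: float,
    errors_per_week: float = 0.0,
    cost_per_error: float = 0.0,
    weeks_per_month: float = 52 / 12,
) -> float:
    """Estimate what the manual process costs per month in labour and errors."""
    hours_per_week = minutes_per_task / 60 * tasks_per_week
    labour = hours_per_week * hourly_cost * weeks_per_month
    errors = errors_per_week * cost_per_error * weeks_per_month
    return labour + errors


# Illustrative figures: 3 minutes per task, 500 tasks a week, £20 per hour,
# and 5 errors a week costing £15 each to fix.
cost = monthly_baseline_cost(3, 500, 20.0, errors_per_week=5, cost_per_error=15.0)
print(f"Baseline: £{cost:,.2f} per month")
```

This is the number the prototype has to beat, so it is worth timing a real sample of tasks rather than relying on estimates from memory.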

The Three Phases of a 6-Week AI MVP

What this actually looks like in practice, phase by phase.

Days 1–10

Needs Immersion

This isn't discovery theatre. It's documentation with teeth.

- Current process map (swimlane diagram, not theory)

- Baseline metric: time per task × volume × hourly cost

- Data inventory: what exists, in what format, going back how far?

- Acceptance criteria: a test set you and your operator agree represents the real task

- Scope boundary: what is explicitly OUT of scope for the prototype

The hardest part of this phase is the scope boundary. Every stakeholder will try to expand it. Your job — and your vendor's job — is to hold the line.

Days 11–28

Working Prototype

The prototype is not a demo. It's a working system operating on real data.

- Ingests data in the format it actually arrives — not a cleaned CSV

- Produces output in the format the team actually needs

- Has a mechanism for operators to flag errors (this is your improvement data)

- Runs on actual infrastructure, not a researcher's laptop

- Processes a representative sample of real production data
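The error-flagging mechanism in the list above can start very small. The sketch below appends each operator correction as one JSON line; the field names and the `record_flag` and `load_flags` helpers are hypothetical, and a real system would write to a file or database rather than the in-memory buffer used here.

```python
import io
import json
from datetime import datetime, timezone


def record_flag(stream, item_id, predicted, corrected, note=""):
    """Append one operator correction to a JSON Lines stream."""
    entry = {
        "item_id": item_id,
        "predicted": predicted,
        "corrected": corrected,
        "note": note,
        "flagged_at": datetime.now(timezone.utc).isoformat(),
    }
    stream.write(json.dumps(entry) + "\n")
    return entry


def load_flags(stream):
    """Read all corrections back, skipping blank lines."""
    return [json.loads(line) for line in stream if line.strip()]


# Usage with an in-memory buffer standing in for a log file.
log = io.StringIO()
record_flag(log, "email-123", predicted="billing", corrected="on_call",
            note="customer reports an outage")
log.seek(0)
print(load_flags(log))
```

Each flagged item pairs what the system said with what the operator says it should have said, which is exactly the labelled data the next iteration needs.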

Days 29–42

Validation

Measure the prototype against your baseline with real production data.

- Accuracy metric measured on held-out test set (not demo data)

- Error profile: what types of inputs does it struggle with?

- Parallel run: the prototype runs alongside human operators for at least one week before it takes over the task

- Failure mode catalogue with root causes

- Go/no-go recommendation with evidence — not opinions
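The first two items in the list above, accuracy on a held-out set and an error profile, can be sketched as follows. The `evaluate` function, the label values, and the input-type tags are illustrative assumptions; the held-out examples must be ones the system never saw during development.

```python
from collections import Counter


def evaluate(predictions, labels, input_types):
    """Return overall accuracy and the error rate for each input type."""
    if not (len(predictions) == len(labels) == len(input_types)):
        raise ValueError("all three lists must have the same length")
    if not labels:
        raise ValueError("the held-out set is empty")

    correct = sum(p == y for p, y in zip(predictions, labels))
    accuracy = correct / len(labels)

    totals = Counter(input_types)
    errors = Counter(
        t for p, y, t in zip(predictions, labels, input_types) if p != y
    )
    error_rate_by_type = {t: errors[t] / totals[t] for t in totals}
    return accuracy, error_rate_by_type


# Tiny illustrative held-out set: urgency labels for four emails.
predictions = ["high", "low", "low", "high"]
labels = ["high", "low", "high", "high"]
input_types = ["plain", "plain", "forwarded", "forwarded"]

accuracy, profile = evaluate(predictions, labels, input_types)
print(f"accuracy: {accuracy:.2f}")
print(profile)
```

Splitting errors by input type is what turns a single accuracy figure into a failure mode catalogue: it shows which kinds of input the system struggles with, not just how often.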

Practical approaches by task type:

What "Done" Looks Like at Week 6

A prototype is done when you can answer yes to all of these. If you can't, you don't have a prototype — you have a demo.

- It operates on real, unmodified production data

- The accuracy metric has been measured on a held-out test set

- At least one real user has used it for real work

- You know the three conditions under which it fails

- You have a baseline number to compare against

How to Evaluate an AI Vendor

Signs of a good fit

Red flags

Making the Internal Business Case

CFOs speak one language: payback period. The pitch isn't "AI will transform our business." It's this:

"This process currently costs us £X per month in labour and errors. The prototype costs £Y and takes 6 weeks. If it works as expected, we recoup the investment in Z months. If it doesn't, we're out £Y and 6 weeks — with a clear understanding of why, which informs what we try next."

That's a capital allocation decision, not a technology bet. It has a defined downside (known cost, known time), a defined upside (measurable), and an explicit learning outcome even in the failure case. Present it accordingly.
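The Z in that pitch is simple arithmetic, sketched below. The `payback_months` function and the example figures are assumptions for illustration, and the calculation ignores ongoing running costs, which would lengthen the payback period.

```python
def payback_months(prototype_cost: float,
                   monthly_process_cost: float,
                   expected_reduction: float) -> float:
    """Months to recoup the prototype cost (Z), given X, Y and an expected saving.

    expected_reduction is the fraction of the current monthly cost the
    prototype is expected to remove, for example 0.5 for a 50% saving.
    """
    if monthly_process_cost <= 0:
        raise ValueError("monthly_process_cost must be positive")
    if not 0 < expected_reduction <= 1:
        raise ValueError("expected_reduction must be in (0, 1]")
    monthly_saving = monthly_process_cost * expected_reduction
    return prototype_cost / monthly_saving


# Illustrative figures: X = £6,000 per month, Y = £18,000, 50% expected saving.
z = payback_months(prototype_cost=18_000, monthly_process_cost=6_000,
                   expected_reduction=0.5)
print(f"Payback period: {z:.1f} months")
```

Running the same calculation with a pessimistic reduction as well as the expected one gives the board a range instead of a single optimistic number.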

The pilot-then-scale pitch removes the biggest barrier to starting: the fear of committing to something with an uncertain outcome. A 6-week prototype is a bet with a clear ceiling. That's a very different conversation from a 14-month transformation programme.

Gradient Insight

Ready to find your first automation win?

Book a 30-minute discovery call. Bring your top three manual processes. We'll tell you which one is most automatable, what a prototype would look like, and what a realistic ROI looks like — before you commit to anything.